How Far Should a HAZOP Really Go?

There’s a pattern that has been gradually emerging in HAZOP studies over recent years. It’s not a change in methodology, but in how rigorously that methodology is being interpreted. Specifically, teams are tending to follow consequence chains further and further away from the initiating cause.

In principle, this aligns with the rules. HAZOPs require the identification of unmitigated consequences, regardless of where they occur. If a deviation in one node can propagate through the system and ultimately impact equipment several units away, then it is valid to capture that outcome. But there is a distinction worth making between completeness and usefulness.

In practice, tracing long chains of consequences often leads to diminishing returns. The further removed the consequence is from the initiating event, the more safeguards are involved. While likelihood isn’t assessed in a traditional HAZOP, the team knows that these consequences are less likely than ones they have already considered.

This has a very real impact on how workshops are conducted. Time spent exploring unlikely cases with a multitude of protections diverts attention from more credible hazards. Teams can lose momentum, and the value of the discussion becomes diluted.

Attempts have been made to manage this by imposing limits. One common suggestion is to restrict consequence recording to adjacent units. While this creates a cleaner boundary, it introduces a different problem. It replaces engineering judgement with an arbitrary rule.

Process systems are not designed around neat boundaries, and neither are their failure modes. A rigid cut-off may exclude genuinely relevant hazards while still allowing less meaningful ones to be included. A more practical approach is to consider the credibility of the pathway.

When following a consequence chain, there is often a point where the same end scenario can be caused by a closer, more direct deviation. In most cases, that closer cause is not only more likely, but also associated with fewer safeguards. At that point, the original initiating event becomes a less significant contributor to the risk.

Continuing to track the original deviation beyond that point does not improve understanding. It simply duplicates analysis that is better addressed elsewhere in the study.

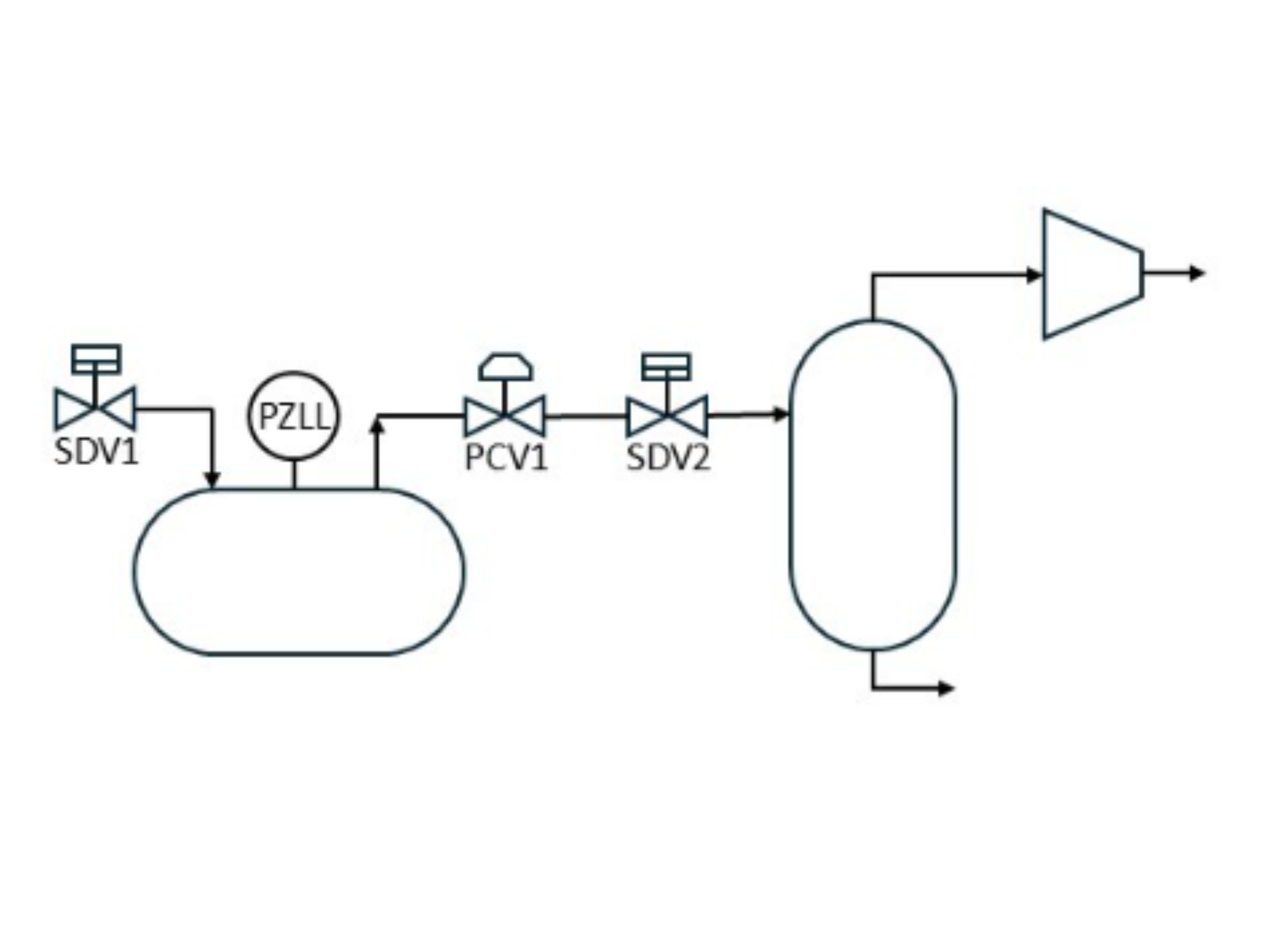

For example, in the illustration above, PCV1 failing closed and SDV2 failing closed would both be considered causes of compressor surge, but SDV1 failing closed would not, as there are more likely causes closer to the compressor (e.g. PCV1) with fewer safeguards (e.g. PZLL).
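The screening logic in this example can be written down as a simple rule, which may help when explaining it to a team. The sketch below is illustrative only: the `Cause` class, the `hops` distance and the safeguard counts are hypothetical values assumed for the sake of the example, not figures read from the illustration, and in a real study this decision rests on the team’s judgement rather than on a calculation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cause:
    tag: str
    hops: int        # assumed distance from the cause to the consequence
    safeguards: int  # assumed number of safeguards along that pathway


def credible_causes(causes):
    """Keep a cause unless another cause is both closer and has fewer safeguards."""
    return [
        c for c in causes
        if not any(o.hops < c.hops and o.safeguards < c.safeguards for o in causes)
    ]


# Hypothetical values for the compressor surge scenario.
causes = [
    Cause("PCV1 fails closed", hops=1, safeguards=1),
    Cause("SDV2 fails closed", hops=1, safeguards=1),
    Cause("SDV1 fails closed", hops=3, safeguards=3),
]

kept = [c.tag for c in credible_causes(causes)]
print(kept)
```

With these assumed values, SDV1 is screened out because PCV1 is both closer to the compressor and has fewer safeguards, while PCV1 and SDV2 are retained because neither is dominated by the other.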

This way of thinking is consistent with how risk is treated in more quantitative approaches such as LOPA, where multiple initiating events may contribute to the same outcome, but the focus remains on those that materially drive the risk.

The key difference is that HAZOP is qualitative. It relies on the judgement of the team to decide where to focus. That judgement is where the value lies.

HAZOPs are often treated as exhaustive exercises, where the objective is to document everything that could possibly occur. In reality, they are most effective when treated as prioritisation exercises. The aim is not to achieve theoretical completeness, but to think creatively, identify the scenarios that genuinely matter, and understand them well.

This requires a level of discipline. It requires facilitators and teams to be comfortable drawing a line, even when the methodology allows them to continue. It also requires an understanding that more analysis is not always better analysis.

A well-run HAZOP is one where time is spent deliberately. Where discussions are grounded in credible scenarios. And where the outcome reflects a clear understanding of the system’s real risks, rather than an accumulation of increasingly remote possibilities.
